The global economy was once fueled by industrialization. Today it’s fueled by knowledge and information. Major technological advances and platform shifts have accelerated this transition: In the 1990s it was the Internet. The 2000s brought the era of cloud computing. The 2010s gave rise to smartphone ubiquity. What were once emergent platforms went on to broaden access to knowledge and transform the way people communicate, create, and consume content.

We predict we’re on the brink of the next platform shift: The knowledge and information economy will be defined by artificial intelligence.

Already, advances in Large Language Models, or “LLMs,” and other generative ML tooling are streamlining content creation. LLMs are complex neural networks that can generate text. They underpin systems like OpenAI’s GPT-3 (text) and Google’s LaMDA (conversational dialogue), and helped inspire OpenAI’s DALL-E and Midjourney (text-to-image).

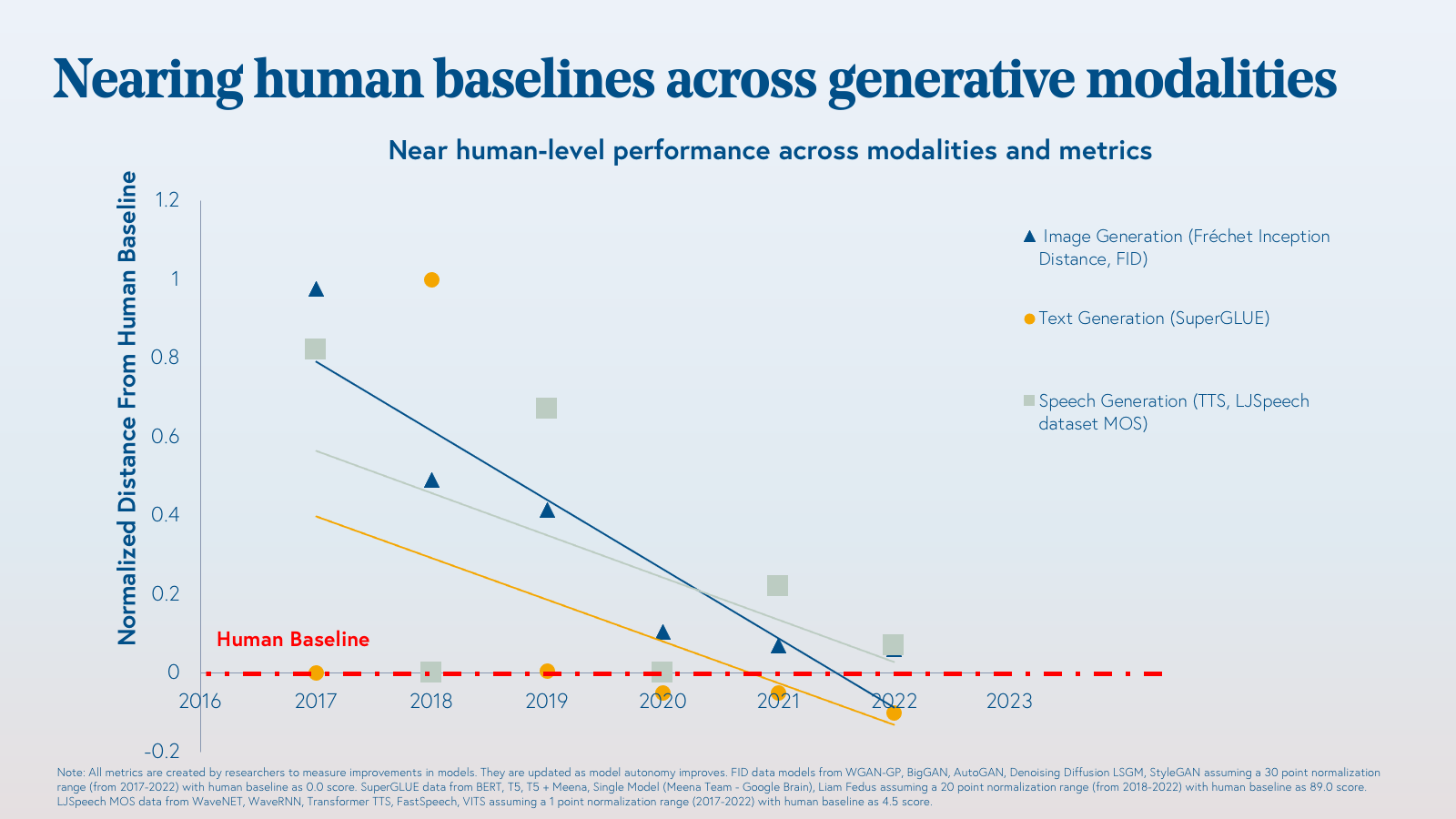

LLMs have been growing by an average of 10x per year in size and sophistication. The result: Modern AI can autonomously generate content (be it text, visual, audio, code, data, or multimedia) on par with human benchmarks.

We made a quick visualization of the GPT improvements, which you can run here: https://nagdab.github.io/bvp_gpt3_iframe/

Language models are progressively becoming the cognitive structure of real-world AI. And we’re witnessing a promising network effect—improvements in large language models tend to flow into downstream tasks and multi-modal models that span text, video, audio, image, code, and beyond. The advances are snowballing and we are approaching an inflection point.

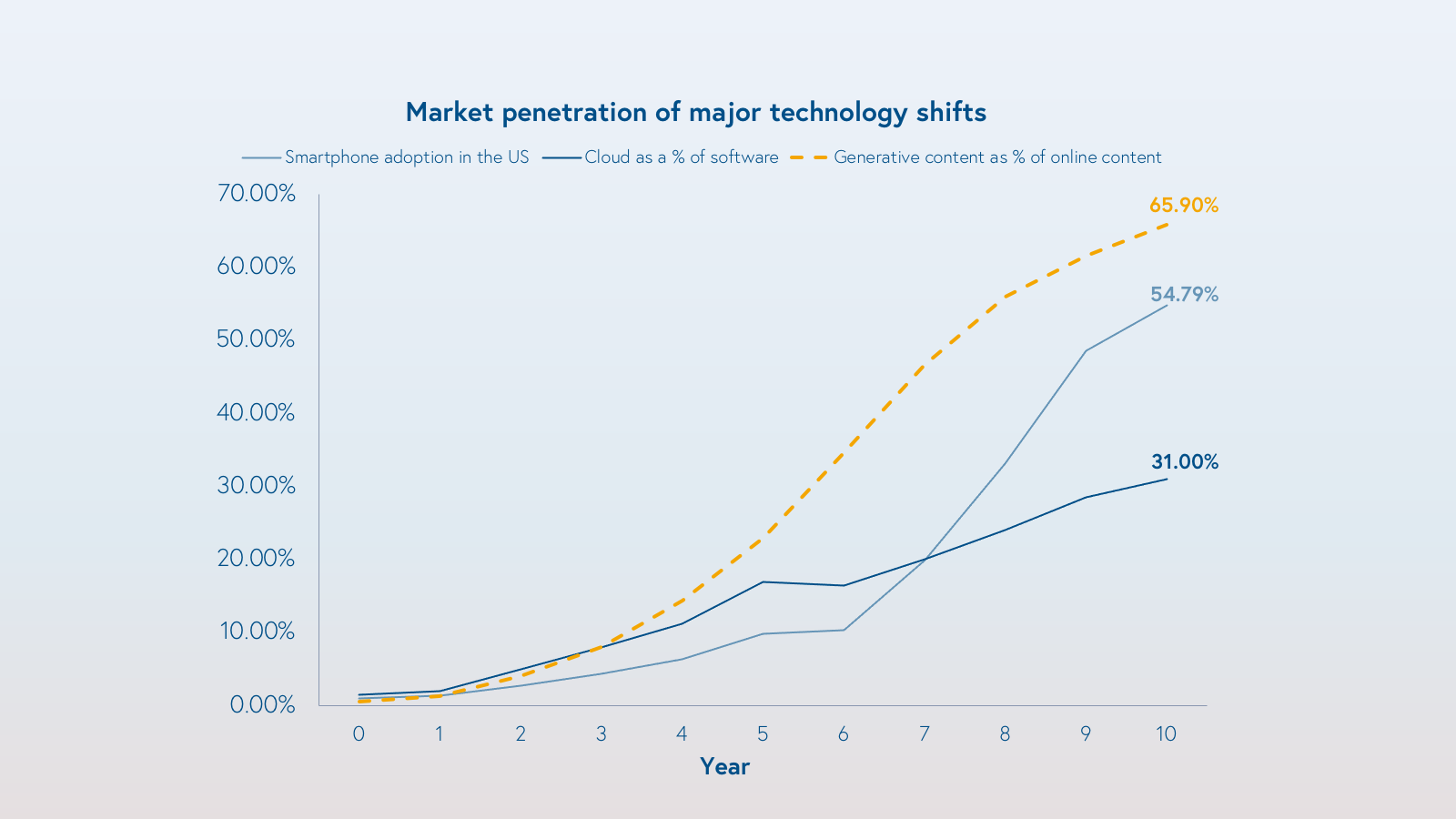

Today, less than 1% of online content is generated using AI. Within the next ten years, we predict that at least 50% of online content will be generated by or augmented by AI.

We are already seeing this trend materialize across industries. Generative AI is being applied across a number of skill sets, and in a few instances the applications are already maturing. In the copywriting space, companies like Pepper Content, Jasper, and Copy.ai are supercharging marketing content; with Jasper, marketers write 5x faster. For programming automation, GitHub’s Copilot, a collaboration with OpenAI, has amassed over 1.2 million users in the last year alone. Likewise, Amazon launched CodeWhisperer, its LLM-based code generation tool, just last week.

Generative AI and LLMs are the foundation of an important paradigm shift in content creation, communication, and knowledge generation. Just as cloud computing and smartphones transformed industries and created entirely new ones, so too will generative AI. In ten years, cloud computing grew from less than 5% of software spend to approximately 30%. Similarly, US smartphone penetration went from 1% to 55%. Generative AI has broad application across media and communications to software to life sciences and beyond. In many use cases, it is both lower cost and higher value, so we think adoption could be even faster.
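To put those adoption curves side by side, here is a rough back-of-the-envelope sketch of the average year-over-year multiple each one implies. It assumes smooth compound growth over exactly ten years and treats the "less than" figures as starting points, so the results are approximate lower bounds rather than measured rates; the helper name `implied_annual_multiple` is ours, for illustration only.

```python
def implied_annual_multiple(start_share, end_share, years):
    """Average year-over-year multiple needed to grow start_share into end_share."""
    return (end_share / start_share) ** (1 / years)


# Figures quoted in this post; starting shares are upper bounds ("less than").
cloud = implied_annual_multiple(0.05, 0.30, 10)       # cloud share of software spend
smartphone = implied_annual_multiple(0.01, 0.55, 10)  # US smartphone penetration
gen_ai = implied_annual_multiple(0.01, 0.50, 10)      # predicted AI share of online content

for name, multiple in [("cloud", cloud), ("smartphone", smartphone), ("generative AI", gen_ai)]:
    print(f"{name}: about {multiple:.2f}x per year")
```

Under these assumptions, cloud works out to roughly 1.2x per year and smartphones to roughly 1.5x per year, so our generative AI prediction implies a pace close to the smartphone curve.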

What underpins the acceleration of generative AI?

1. Performance is approaching human baseline

The power, accessibility, and robustness of generative ML is growing. Many generative models are nearing if not surpassing human benchmarks on certain metrics.

The pace of advancement is awe-inspiring. The improvements are a result of decades of ML and computing innovation and technological advances including:

- Hardware: Moore’s law and Dennard scaling, together with parallelization and improved GPU architectures, allow us to train larger models on greater amounts of data.

- Model architecture: The advent of the transformer has catalyzed the rapid development and success of LLMs. The ‘self-attention’ capability of transformers allows them to learn long-range connections and ultimately find versatile use across domains, including computational biology (e.g. AlphaFold2) and natural language (e.g. transformer-based LLMs).

- Few-shot learning: LLMs can perform certain tasks with little to no task-specific training, given only a handful of examples. Domains where data is scant may now be in play. This will accelerate innovation and startup creation, as labeling large datasets has traditionally been a bottleneck and cost center for companies. The few-shot paradigm improves the bootstrapping economics for startups.
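To make the few-shot idea concrete, here is a minimal sketch of how a few-shot prompt might be assembled: a short instruction, a couple of labeled examples, and then the new input left for the model to complete. The task, the example reviews, and the helper name `build_few_shot_prompt` are all hypothetical, and the sketch only builds the prompt text; it does not call any particular model or API.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, labeled examples, and an unlabeled query into one prompt."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final item has no label; the model is expected to fill it in.
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


# Hypothetical labeled examples: two is often enough to show the pattern.
examples = [
    ("The onboarding was effortless.", "positive"),
    ("Support never answered my ticket.", "negative"),
]

prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    examples,
    "The new dashboard saves me an hour a day.",
)
print(prompt)
```

The point is the economics: the "training data" here is two hand-written examples in the prompt, not a large labeled dataset.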

2. Large Language Models benefit from a flywheel effect

As large language models improve, we are seeing the advances flow to downstream tasks and multi-modal models. These are models that can take multiple different input modalities (e.g. image, text, audio), and produce outputs of different modalities. This is not unlike human cognition; a child reading a picture book uses both the text and illustrations to visualize the story.

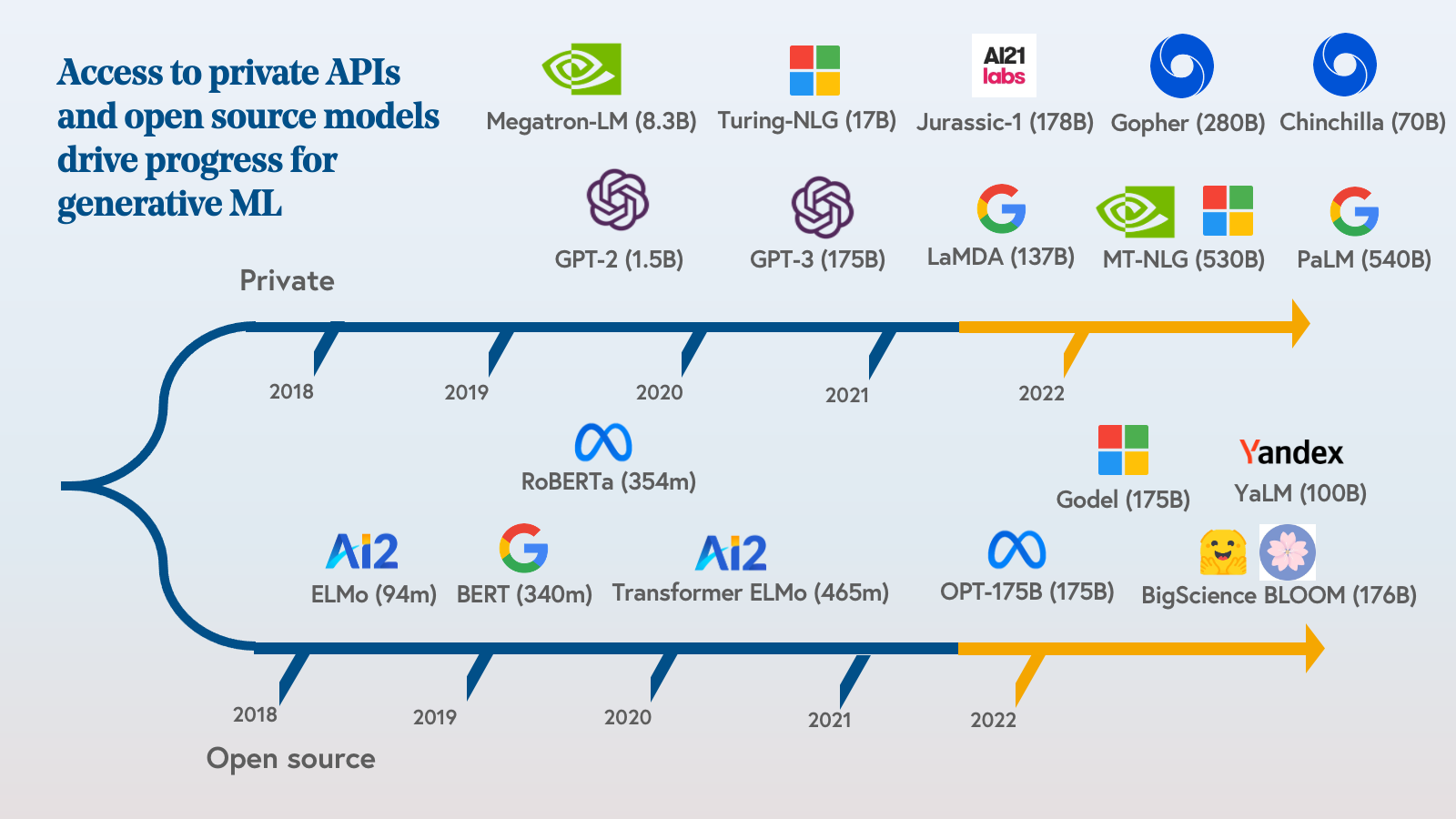

3. Accessibility

Unlike in years past, developers can now access private APIs (e.g. OpenAI, AI21 Labs) and open source models for generative ML. Just in the last month, both Meta and Hugging Face have sponsored open source models. The timeline below provides a snapshot of the acceleration in activity.

As we reach an inflection point for commercial and developer access, guardrails for policy, ethics, and law are being constructed. At Bessemer, we consider ethical AI deployment to be both a business and a social imperative. We are emboldened by the growth in policymaking, monitoring, and self-policing of the technology to protect people and systems from misuse and harm.

We’re in the early days of a fundamental shift in the way we leverage and scale human intelligence. We are eager to partner with founders building products and services that leverage or broaden access to generative AI.

If you’re building for our AI-generated future or supporting the advancement of this ecosystem, we’d love to hear from you! Please reach out to us @taliagold, @lucypless, @bhavikvnagda.

**This piece was co-authored with Bhavik Nagda and Lucy Pless and originally posted on the Bessemer blog**
