Nine months ago, I gave a presentation about value capture in AI. I outlined a working hypothesis on where value would accrue and the forces at play across the application, platform, and model layers. But I left a glaring hole.

The biggest beneficiary is not the compute or chip providers. Not the foundation model or application layer. Nor the incumbent platforms.

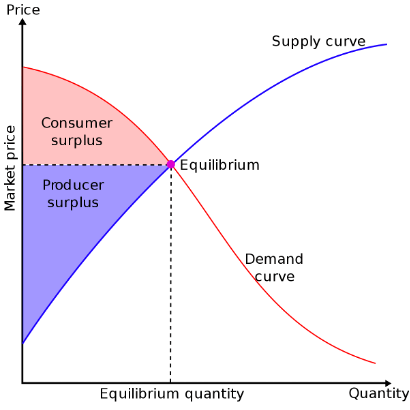

It is you. The consumer. Consumers and end users will capture most of the value generated by AI. New profit pools are being created, but they will prove shallower than one might imagine.

AI is generating massive consumer surplus. In other words, the value of the efficiency gains and experiences that AI enables far exceeds its market price. And this dynamic is just getting started.
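For readers who want that pinned down, here is the standard textbook definition of consumer surplus, sketched in notation of my own choosing (the symbols are illustrative assumptions, not measurements): let $v_i$ be what user $i$ would be willing to pay for an AI-enabled product and $p$ the price actually charged.

$$
\text{CS} = \sum_{i} \max(v_i - p,\ 0)
$$

If $p$ falls toward zero while each $v_i$ holds steady, nearly all of the value created lands in this sum rather than in producer revenue.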

Outsized value capture is the result of strong moats. And where are the moats in AI?

- Foundation models are primarily built on public data. With synthetic data, transfer learning, and other techniques, the value of private data is limited.

- The open-source ecosystem is thriving, making AI extremely accessible and affordable. Case in point: AlphaFold. It predicts protein structures and is delivering groundbreaking advances for the life sciences. It is broadly adopted and hugely valuable. But as an open-source offering, the value it generates shows up as consumer surplus. Open source drives down prices and challenges centralized moats.

- Prices are plummeting as performance improves, thanks to scaling laws, compute cost curves, architectural improvements, and more. Deep-pocketed, capable competitors like OpenAI and Google drive down prices as they compete for share. Sure, it costs $$$ to train large models, but it isn’t *that* expensive. Training a model with GPT-3-level performance now costs <$500k, an order-of-magnitude decrease in two years.

- AI features are becoming table stakes. Take AI writing as an example: Notion, Google, Microsoft, and others integrate these features for free in their suites. Apps are rushing to embed AI into their products. They must, to stay competitive. But everyone has access to the same AI enablers and features, and all value is passed on to the customer. In fact, it often costs the producer more to serve this functionality than the incremental revenue (if any) it generates.

- What about RLHF and moats from proprietary usage data? See the first point above. I also like this take from Eliezer Yudkowsky. It isn’t clear RLHF provides *enough* differentiation on its own. I see RLHF as a secondary moat, the result of a distribution advantage or network effect. That is the real moat. RLHF alone is bupkis unless you’ve cracked the chicken-and-egg problem in a sustainable manner. Midjourney embodies this.

AI is becoming extremely affordable, accessible, and available. We’re on the brink of unprecedented productivity gains driven by AI, particularly for white-collar services and jobs. Productivity gains usually = more revenue for the same level of input, which = higher GDP. But ironically, AI is pushing prices downwards. We are on a trendline towards $0.

Lina Khan must be pinching herself.

All the traditional moats – scale, network effects, switching costs, etc. – are as valid as ever. But it isn’t clear that “AI native” applications or platforms have any natural producer surplus or winner-take-most dynamics. Rather, the opposite seems true. The overwhelming beneficiary of the AI revolution is the end user and consumer.

This dynamic reminds me of a favorite Charlie Munger story, where he explains how certain technology innovations result in consumer surplus rather than producer profit. Investors beware.

And so, my obsession with distribution and network effects persists. We’ve been living in an era where Google, Apple, Amazon, and Meta are the primary gatekeepers for internet distribution. AI isn’t clearly changing that, at least not yet, but it could. AI enables new user experiences – replacing GUIs with natural language interfaces. Search vs. ChatGPT is the first mainstream example. And a revolutionary user interface can upend the status quo. Just ask Steve Jobs.
